| Key takeaway. Between January 2024 and March 2026, Google AI Overviews moved from appearing in roughly 6.5% of searches to appearing in 48%. Depending on the study, organic click-through rates have fallen by 34.5% to 61% on queries where an AI summary is shown. The ranking game has not ended; the rules have changed. Writers who treat content as a citable source for machines, while keeping a clear human voice and verifiable expertise, are the ones still gaining ground. |

Introduction: A Quiet Restructuring of the Open Web

Search has not died. It has been re-plumbed. Nearly two years after Google began rolling out AI Overviews in May 2024, the way information moves from the web to the reader looks different from anything content teams were trained on. Users still type queries into the search box. The ten blue links still exist. But for almost half of those queries, the answer now arrives before the user sees a single result link, written by a model and stitched together from sources that may or may not include the page that produced the underlying knowledge.

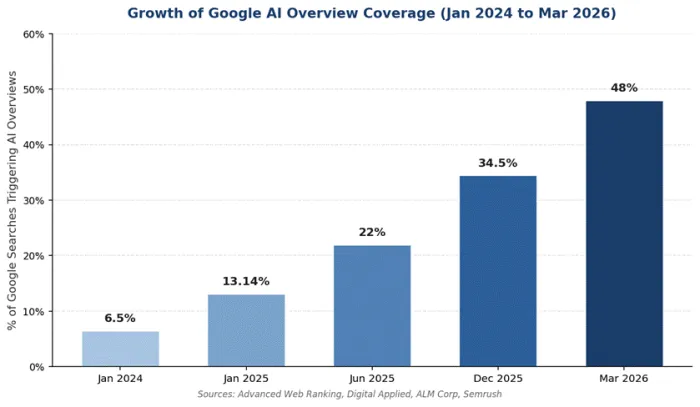

The numbers behind that shift are not small. According to Advanced Web Ranking and Digital Applied tracking, AI Overviews now appear in 48% of all U.S. search queries as of March 2026, up from 31% one year earlier and roughly 6.5% in early 2024 (theStacc, April 2026; Search Engine Journal, 2025). The Pew Research Center, in a study covering 68,000 real queries, found that the click-through rate on standard search results fell from 15% to 8% when an AI summary was present, a 46.7% relative decline (Pew Research, 2025). Seer Interactive, looking at 3,119 informational queries across 42 organisations between June 2024 and September 2025, measured a 61% drop in organic CTR on queries with AI Overviews (Seer Interactive, 2025).

This article looks at what has actually happened to rankings, traffic, and editorial workflows, using verifiable data from Pew, Ahrefs, Semrush, the Reuters Institute, the Professional Publishers Association, and Digital Content Next. It walks through three case studies (DMG Media, Chegg, and the wider mid-tier publisher tier), explains the mechanics of how AI Overviews and AI Mode select sources, and finishes with a working playbook for writers and editorial teams who want to remain visible in 2026 and beyond.

How the System Actually Works Now

The phrase "AI changed Google" gets used so loosely that it stops meaning anything specific. The useful version is more concrete. In 2026, a single Google query can trigger five different surfaces above the traditional list of results: an AI Overview, an AI Mode response, a People Also Ask block, a paid ad cluster, and, on mobile, a featured snippet. The average AI Overview is roughly 169 words long and links to about seven sources when expanded (Advanced Web Ranking, 2025). Once the AI Overview is open, the first organic result often appears around 1,674 pixels down the page, well below the fold on most screens (Search Engine Journal analysis).

That layout change matters because it converts a click problem into a citation problem. Either your domain is one of the seven sources cited inside the AI Overview, in which case the model carries some of your authority into its answer, or the user receives an answer assembled from competitors and never sees your listing. Ahrefs found that 76.1% of URLs cited in AI Overviews also rank in the top 10 of organic results (Ahrefs, June 2025), which means traditional SEO still feeds the funnel. But it is no longer the only door.

AI Mode and the rise of multi-source synthesis

Google AI Mode, the more aggressive conversational layer, behaves differently again. SE Ranking ran the same query three times through AI Mode and found that the three responses overlapped with one another only 9.2% of the time (SE Ranking, August 2025). When AI Mode is active, 93% of searches end without a click to an external website (theStacc, 2026). The model is not selecting one canonical winner; it is reweaving ten sources into a new paragraph each time. The implication for content creators is that being mentioned, accurately and consistently, across a wide set of credible third-party pages now matters as much as ranking on your own page.
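One way to make "overlap between runs" concrete is to compare the sets of sources cited in each run. The sketch below uses Jaccard similarity averaged over every pair of runs. This is an illustrative assumption, not SE Ranking's published methodology, and the URLs are hypothetical placeholders.

```python
from itertools import combinations


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of items two sets have in common, from 0.0 to 1.0."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def mean_pairwise_overlap(runs: list[set[str]]) -> float:
    """Average Jaccard overlap across every pair of runs."""
    pairs = list(combinations(runs, 2))
    if not pairs:
        raise ValueError("need at least two runs to compare")
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


# Hypothetical cited URLs from three runs of the same prompt.
runs = [
    {"a.example/guide", "b.example/review", "c.example/faq"},
    {"a.example/guide", "d.example/post", "e.example/list"},
    {"f.example/news", "b.example/review", "g.example/docs"},
]
overlap = mean_pairwise_overlap(runs)
print(f"{overlap:.1%}")
```

A low score on a measure like this suggests that no single page can count on being cited every time, which is why presence across many credible sources matters.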

The growth curve everyone is responding to

The figure below shows how quickly AI Overview coverage has expanded. The acceleration in the second half of 2025 is what tipped the issue from "interesting feature" to "editorial emergency" for many publishers.

Figure 1. AI Overview coverage as a percentage of all U.S. Google queries.

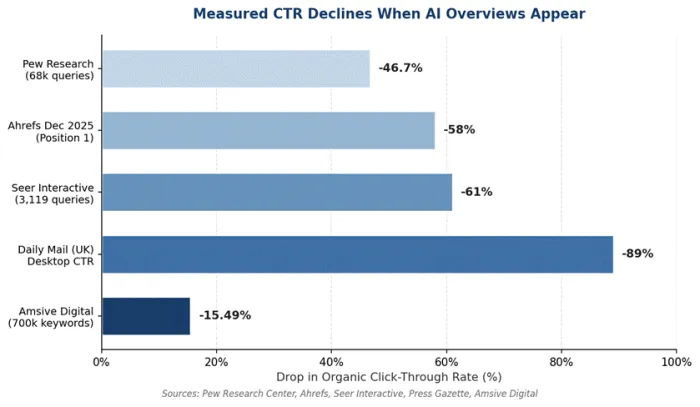

The Click-Through Rate Collapse

The most concrete way to describe the change is in click-through rate, the share of users who click any result on a search page. Multiple independent studies from 2024 through 2026 confirm a sharp decline whenever an AI Overview is present, although the size of the drop varies by methodology and query type.

Three studies in particular have shaped the industry conversation:

• Pew Research Center (March 2025): Across roughly 68,000 real Google searches by 900 U.S. consumers, click rates fell from 15% to 8% when an AI Overview was present, a 46.7% relative decline. Only 1% of users clicked on a link inside the AI Overview itself.

• Ahrefs (June 2025, updated December 2025): An analysis of 300,000 keywords found a 34.5% CTR drop for position-1 rankings in mid-2025, deepening to 58% by December 2025 as AI Overview presence grew.

• Seer Interactive (September 2025): Across 3,119 informational queries spanning 25.1 million organic impressions, organic CTR fell 61% (from 1.76% to 0.61%) on AI Overview queries. Paid CTR dropped even further, from 19.7% to 6.34%.

The variance between studies is not a contradiction. It reflects different keyword sets, different query types, and different tracking windows. The direction of every credible study points the same way: when an AI Overview appears, fewer users click anything below it.
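The relative-decline figures quoted above follow from simple arithmetic, and it helps to keep them separate from percentage-point changes. A minimal sketch, using the Pew figures from this section (the function names are illustrative):

```python
def point_change(before_pct: float, after_pct: float) -> float:
    """Absolute change in percentage points (negative means a drop)."""
    return after_pct - before_pct


def relative_decline(before_pct: float, after_pct: float) -> float:
    """Decline expressed as a percentage of the starting rate."""
    if before_pct <= 0:
        raise ValueError("before_pct must be positive")
    return (before_pct - after_pct) / before_pct * 100


# Pew Research: 15% click rate without an AI summary, 8% with one.
pew_points = point_change(15.0, 8.0)
pew_relative = relative_decline(15.0, 8.0)
print(f"{pew_points:+.1f} points, {pew_relative:.1f}% relative decline")
```

The same change reads as a 7-point drop or a 46.7% relative decline, so it is worth checking which of the two a given study is reporting before comparing headlines.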

Figure 2. CTR declines reported by major studies of AI Overview impact, 2025 to 2026.

There is a small but important counter-current inside this data. Brands that are themselves cited inside the AI Overview earn 35% more organic clicks and 91% more paid clicks than equivalent brands not cited (Seer Interactive). In other words, the citation slots inside the AI Overview have become a new premium real estate. For sites that earn one, the AI summary is not a thief; it is a referral. For sites that do not, the same summary is a wall.

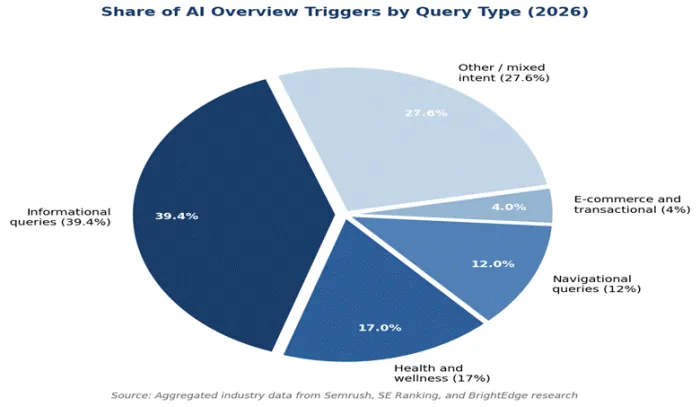

Where the Damage Is Concentrated: Query Type Matters

AI Overviews do not appear evenly. They are heavily skewed toward informational queries, where the user is asking the model to teach or explain rather than to navigate or transact. Semrush data from January 2026 shows that informational searches trigger AI Overviews 39.4% of the time, while e-commerce and transactional queries trigger them only about 4% of the time (Semrush; WordStream, April 2026).

This is the single most important fact for content strategists. The kind of writing most affected, top-of-funnel "what is" articles, definitions, basic how-tos, has lost the most ground. Bottom-of-funnel content, including case studies, pricing pages, comparison tables, and product reviews, has held up better and in some categories has gained ground because users still need to click to make a decision.

Figure 3. Distribution of AI Overview triggers by query type in 2026.

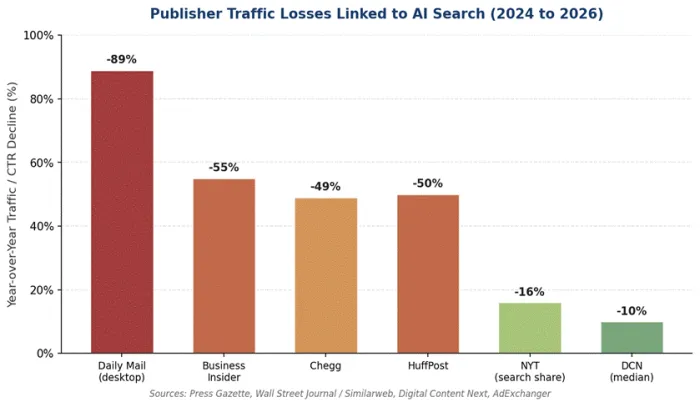

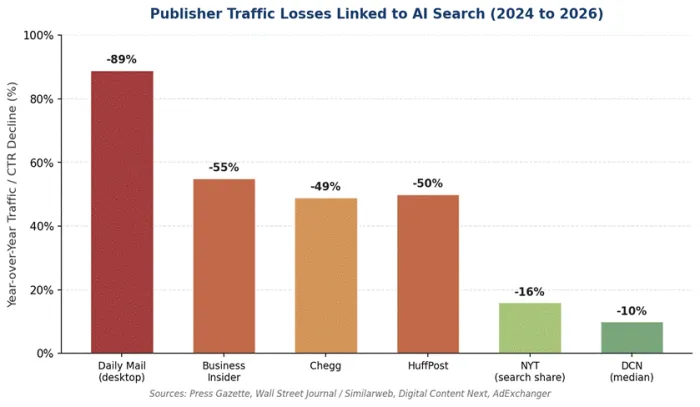

Industry-level data tells the same story from a different angle. Health queries trigger AI Overviews around 17% of the time and science queries roughly 16%, with law and finance also triggering them significantly more often than e-commerce. The Daily Mail, Business Insider, HuffPost, and Chegg each lost between 49% and 89% of their organic search performance on certain query types between mid-2024 and early 2026, while top-100 e-commerce sites saw far smaller effects (Press Gazette; Wall Street Journal via Similarweb; Chegg disclosure).

Three Case Studies in Adaptation

To understand the practical consequences, look at three named outlets whose data is publicly documented. Each represents a different category of impact and a different response.

Case study 1: DMG Media (Daily Mail, MailOnline, Metro)

DMG Media is the largest case study by sheer scale. In testimony submitted by the Professional Publishers Association to the UK's Competition and Markets Authority, the Daily Mail's director of SEO, Carly Steven, reported that the publication's desktop CTR dropped from 25.23% to 2.79% on queries where an AI Overview surfaced above a visible link, an 89% relative decline. Mobile traffic on the same queries fell roughly 87%. U.S. figures for the same outlet were similar (Press Gazette; AdExchanger, January 2026).

The numbers describe a worst-case scenario rather than an average across the publication. But they show how concentrated the damage can be on the exact informational queries where a major publisher historically earned its largest click volumes. In response, DMG has shifted editorial weight toward original reporting, branded shows, and direct subscription products, on the principle that an AI Overview cannot summarise an interview that exists nowhere else on the open web.

Case study 2: Chegg and the educational content trap

Chegg presents the cleanest story of structural disruption. The company filed a federal antitrust lawsuit against Google in February 2025 alleging that AI Overviews use Chegg's own educational content (worked solutions, study guides, and exam prep) to answer the same homework questions Chegg used to monetise. Chegg reported a 49% drop in organic search traffic in the year leading up to the suit, alongside a roughly 24% decline in subscriber numbers (Search Engine Journal, 2025; Reuters).

The Chegg case matters beyond Chegg. It is the first regulator-facing test of the question: if a model is trained on your content and then competes with you using paraphrased versions of that content, what is your remedy? The case is unresolved as of April 2026, but it has already shaped how educational publishers approach AI crawler permissions, content licensing, and direct relationships with AI providers.

Case study 3: The mid-tier publisher squeeze

The most overlooked story is what is happening to mid-tier sites, the ones ranked between roughly position 100 and 10,000 by traffic. Search Engine Land's January 2026 coverage of Graphite data found that the 10 largest sites in the U.S. grew about 1.6% year over year, while the sharpest declines hit sites in that mid-tier band (Search Engine Land, January 2026; The Digital Bloom, March 2026).

Why? Three reasons. First, large sites have brand recognition that drives direct navigation. Second, large sites carry the entity authority that AI models prefer when selecting citation sources. Third, mid-tier publishers have historically earned a disproportionate share of their visits from informational long-tail queries, exactly the queries that AI Overviews now answer in place of the click. The Reuters Institute's Journalism and Technology Trends 2026 Report found that publishers expect an average 43% traffic decline over the next three years, with one-fifth expecting losses above 75% (Reuters Institute, 2026).

Figure 4. Documented year-over-year traffic and CTR declines at major publishers, 2024 to 2026.

Market Analysis: The Re-pricing of Visibility

If the click is becoming scarce, what is taking its place? Three market shifts explain where the value has moved.

Shift 1: Paid is absorbing what organic is losing

ALM Corp's February 2026 analysis of organic versus paid click share across nine industries showed that organic click share fell between 11 and 23 percentage points across every vertical measured, while paid (text ad) click share rose 7 to 13 points in the same categories. Search Engine Land summarised the trend as paid click share doubling while organic click share collapsed in AI-heavy queries (ALM Corp, February 2026; The Digital Bloom, 2026).

This is not just a Google revenue story. It tells content teams that the SERP is being repriced. Paid placement, which used to be a supplement to a strong organic position, is increasingly the only reliable click path on informational queries where the AI Overview answers the question.

Shift 2: Search demand is rising, not falling

Despite the publisher data, total search demand has actually grown. Graphite data covered by Search Engine Land found total search usage (combining traditional engines and LLM-based search) up roughly 26% worldwide and 16% in the U.S. between early 2025 and early 2026. Users are searching more, not less. The pie has gotten bigger, but the slices going to publisher websites have gotten thinner. The gain has gone to AI summaries, ChatGPT (now at roughly 800 million weekly active users), Perplexity (around 780 million queries a month), and to the few sites that earn citations across multiple platforms.

Shift 3: Citation share has become a measurable asset

Princeton researchers, who introduced the term Generative Engine Optimization in 2023, found that the top three GEO tactics (citing primary sources, adding statistics, and including direct quotations from named experts) lift AI visibility by 30 to 40% compared to unoptimised content (Princeton GEO research, via Digital Applied, 2026). Profound's March 2026 dataset shows that the top 10 domains take 46% of all ChatGPT citations within a topic, and the top 30 take 67%. Citation share, in other words, is concentrating in the same way that organic share concentrated a decade ago.
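Concentration figures like "the top 10 domains take 46%" can be computed from any table of citation counts per domain. The sketch below shows the calculation with hypothetical domains and counts. It is not Profound's dataset or method.

```python
def top_k_share(citations_by_domain: dict[str, int], k: int) -> float:
    """Fraction of all citations captured by the k most-cited domains."""
    total = sum(citations_by_domain.values())
    if total == 0:
        raise ValueError("no citations recorded")
    top = sorted(citations_by_domain.values(), reverse=True)[:k]
    return sum(top) / total


# Hypothetical citation counts for one topic.
counts = {
    "alpha.example": 40,
    "beta.example": 25,
    "gamma.example": 15,
    "delta.example": 12,
    "epsilon.example": 8,
}
share_top_2 = top_k_share(counts, 2)
print(f"Top 2 domains: {share_top_2:.0%} of citations")
```

Tracking this number over time for a topic shows whether citations are spreading across more sources or consolidating around a few.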

Google's March 2026 Core Update and the New Weight on Experience

On the algorithm side, the most consequential event of the past 12 months was the March 2026 core update. Industry analyses (Digital Applied, Evertune, Orange MonkE) describe the update as a re-weighting that elevated Experience above the other three E-E-A-T pillars. Sites with high domain authority but thin first-hand content lost ground to lower-authority sites whose contributors clearly demonstrated direct, named involvement with the subject matter (Digital Applied, March 2026; Evertune, 2026).

The pattern across winners and losers in March 2026 was consistent:

• Winners: named authors with verifiable credentials, original research, first-person case studies, original screenshots and data, and tightly defined topical authority within a single subject area.

• Losers: unattributed content, generic AI-generated overviews, affiliate review sites without original testing, and aggregator blogs covering many topics at shallow depth.

Google's public position on AI-generated content remains unchanged: the algorithm does not penalise content for being AI-assisted; it penalises content that lacks experience, expertise, originality, or oversight. A Semrush analysis of more than 42,000 blog posts published in 2026 found that human-written or human-edited content held the number one position roughly 80% of the time, compared to 9% for purely AI-generated pages (Semrush, 2026, via Website Content Writers). The differentiator is not the tool. It is what happens after the draft exists.

| What "experience" looks like in 2026. Not "this tool is useful for keyword research" but "I used it on a site with 4,000 indexed pages and found 340 pages competing for the same three keywords; here is how I resolved the cannibalisation." Specificity is hard to fabricate, and quality raters and AI models alike treat it as a trust signal. |

What Writers Must Do Differently in 2026

The good news is that the changes have a structure. The skills that earn citations, hold rankings, and survive core updates are not mysterious. They are the same disciplines that strong journalism, technical writing, and analyst research have always relied on, applied with awareness of how machines now read and reuse that work.

1. Write the answer in the first 100 words

Wix's March 2026 analysis of LLM citations found that 44.2% of all citations come from the first 30% of a page's text. AI systems do not read content the way users do; they look for compact, extractable answers. Lead each section with a direct, self-contained answer, then expand with context, evidence, and examples. Burying the answer below 600 words of preamble sharply reduces the odds that the page is cited at all.
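An editorial check for this rule can be automated in a few lines. The sketch below tests whether the terms an answer depends on appear within the first 100 words of a section. The function name, the word limit, and the sample text are illustrative assumptions, and simple term matching is only a rough proxy for "the answer is stated up front".

```python
import re


def lead_terms_present(
    section_text: str, required_terms: list[str], word_limit: int = 100
) -> dict[str, bool]:
    """Report which required terms occur within the first word_limit words."""
    words = re.findall(r"[\w'-]+", section_text.lower())
    lead = set(words[:word_limit])
    return {term: term.lower() in lead for term in required_terms}


# Hypothetical section openings: one direct, one with 120 words of preamble.
direct = "An AI Overview is a generated summary that appears above the organic results."
buried = " ".join(["filler"] * 120) + " An AI Overview is a generated summary."

direct_report = lead_terms_present(direct, ["summary", "organic"])
buried_report = lead_terms_present(buried, ["summary"])
print(direct_report)
print(buried_report)
```

Sections that fail a check like this are candidates for moving the answer sentence to the top.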

2. Build for query fan-out, not single keywords

When a user asks an AI a complex question, the model breaks it into smaller sub-queries (often called fan-out queries) and searches each one separately. A query like "best email tool for a small e-commerce store under 10,000 subscribers" becomes three or four sub-searches. The page that ranks for all of them, or that is the most authoritative answer to the most specific sub-query, gets cited. Single-keyword targeting still helps for traditional rankings; fan-out coverage is what wins AI visibility.
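A simple way to plan for fan-out is to write down the likely sub-queries by hand and check which existing pages address each one. The sketch below does this with keyword matching. The sub-queries, keywords, and page texts are hypothetical, and real AI systems do not publish the sub-queries they generate, so treat the list as an editorial guess.

```python
def fan_out_coverage(
    sub_queries: dict[str, list[str]], pages: dict[str, str]
) -> dict[str, list[str]]:
    """Map each sub-query to the pages whose text contains all of its keywords."""
    coverage = {}
    for label, keywords in sub_queries.items():
        coverage[label] = [
            url
            for url, text in pages.items()
            if all(keyword.lower() in text.lower() for keyword in keywords)
        ]
    return coverage


# Hand-written guesses at how the example query might be split.
sub_queries = {
    "pricing by list size": ["pricing", "subscribers"],
    "e-commerce integrations": ["e-commerce", "integration"],
    "ease of setup for small teams": ["small", "setup"],
}
# Hypothetical site content, keyed by URL path.
pages = {
    "/email-tool-pricing": "Pricing tiers for lists under 10,000 subscribers, compared.",
    "/store-integrations": "How each integration connects an e-commerce store to its email platform.",
}

coverage = fan_out_coverage(sub_queries, pages)
gaps = [label for label, urls in coverage.items() if not urls]
print(gaps)
```

Sub-queries with no matching page are the gaps worth filling first.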

3. Anchor every claim to a specific, dated source

Princeton's GEO study found that adding citations, dates, and statistics is the single most reliable way to improve citation rates in AI responses. Generic phrases like "studies have shown" lose to specific phrases like "a Pew Research study of 68,000 queries in March 2025 found...". The same principle that strengthens journalism credibility (named, dated, verifiable sources) also signals to AI models that the page is safe to quote.

4. Treat author identity as ranking infrastructure

Sites that added structured author pages with verifiable credentials, professional affiliations, and consistent bylines saw measurable ranking improvements within weeks of the March 2026 update (Digital Applied). Author identity is not a marketing nicety any more; it is part of how Google evaluates whether a piece of content can be trusted to rank, and how AI engines decide whose voice to pull into a synthesis.
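Structured author information is commonly expressed as schema.org JSON-LD embedded in the page. The sketch below builds an Article object with a Person author using standard schema.org properties. The source does not say which markup the sites in question used, and every value shown is a placeholder.

```python
import json


def article_jsonld(
    headline: str,
    date_published: str,
    author_name: str,
    job_title: str,
    employer: str,
    profile_urls: list[str],
) -> dict:
    """Build schema.org Article markup with a named, attributable author."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": date_published,
        "author": {
            "@type": "Person",
            "name": author_name,
            "jobTitle": job_title,
            "affiliation": {"@type": "Organization", "name": employer},
            "sameAs": profile_urls,  # links to profiles that corroborate identity
        },
    }


# Placeholder values for illustration only.
markup = article_jsonld(
    headline="Example headline",
    date_published="2026-04-01",
    author_name="Jane Example",
    job_title="Senior Editor",
    employer="Example Media",
    profile_urls=["https://example.com/authors/jane-example"],
)
print(json.dumps(markup, indent=2))
```

The output would typically be placed inside a script tag of type application/ld+json, and the byline on the page should match the name in the markup.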

5. Build content depth, not breadth

Semrush, Ahrefs, and Evertune all reached the same conclusion in their 2026 analyses: domain-level topical authority outweighs page-level optimisation. A site that covers one subject thoroughly outperforms a site that publishes broadly across unrelated topics. Editorial calendars built around 50 deep articles in one vertical now compound faster than the same 50 articles spread across five verticals.

6. Stop blocking the AI crawlers you want citations from

This is the most overlooked technical issue of 2026. Cloudflare introduced a one-click setting to block AI crawlers in 2024 and, from July 2025, began blocking them by default on newly added domains. The affected user agents include GPTBot, ClaudeBot, and PerplexityBot; Google-Extended is a robots.txt control token rather than a separate crawler, but it is often disallowed alongside them. Many sites are blocking the exact bots they need to allow in order to be cited inside ChatGPT, Claude, and Perplexity. Audit robots.txt and CDN configurations and decide deliberately which AI crawlers you allow, rather than letting a default reject them.
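The robots.txt half of that audit can be scripted with Python's standard library. The sketch below parses a robots.txt file and reports whether each AI user agent may fetch a given path. The sample file is hypothetical, and the check covers robots.txt rules only; blocks applied at the CDN or firewall level will not show up here.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens named in this section.
AI_AGENTS = ("GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended")


def audit_robots(
    robots_txt: str, agents: tuple[str, ...] = AI_AGENTS, path: str = "/"
) -> dict[str, bool]:
    """Return, per agent, whether robots.txt allows fetching the given path."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, path) for agent in agents}


# Hypothetical robots.txt that blocks one AI crawler and allows everything else.
SAMPLE = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

report = audit_robots(SAMPLE)
for agent, allowed in report.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

To audit a live site, the contents of its robots.txt file can be passed in as the first argument in place of the sample.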

7. Measure share of model, not just rank

Traditional SEO measures rank position and CTR. Neither of those metrics captures whether your brand is being mentioned inside ChatGPT, Gemini, or AI Overviews. Share of Model (the share of AI-generated responses that include your brand or content for a given prompt) has become the leading indicator of long-term visibility. Several tools, including Profound, Frase, and Brandlight, now track citation rates across multiple AI engines. Whichever method a team uses, the metric belongs in the same dashboard as organic traffic.
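For teams without a dedicated tool, the metric as defined above can be approximated by sampling AI responses to a fixed prompt and counting brand mentions. The sketch below assumes the responses have already been collected as plain text. The brand name and sample answers are hypothetical, and substring matching will miss paraphrased or misspelled mentions.

```python
def share_of_model(responses: list[str], brand_terms: list[str]) -> float:
    """Fraction of responses that mention at least one brand term (case-insensitive)."""
    if not responses:
        raise ValueError("no responses sampled")
    terms = [term.lower() for term in brand_terms]
    hits = sum(any(term in response.lower() for term in terms) for response in responses)
    return hits / len(responses)


# Hypothetical answers sampled for one prompt.
samples = [
    "For small stores, Acme Mail and two competitors are commonly recommended.",
    "Popular options include several established newsletter platforms.",
    "Reviewers often cite acme mail for its pricing under 10,000 subscribers.",
    "Most guides suggest comparing deliverability and automation features.",
]
share = share_of_model(samples, ["Acme Mail"])
print(f"Share of model: {share:.0%}")
```

Because AI responses vary between runs, the figure is only meaningful when the same prompts are sampled repeatedly and compared over time.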

8. Make formats AI engines actually cite

Wix's March 2026 study of LLM citations found that listicles account for 21.9% of citations, articles for 16.7%, and product or comparison pages for 13.7%. The remaining citations are split across forum threads, reviews, and reference content. The format itself influences citation odds. A clear comparison table, a numbered list of options, or a structured FAQ converts to an AI citation more reliably than the same information in undifferentiated prose.
