The state of content in 2026

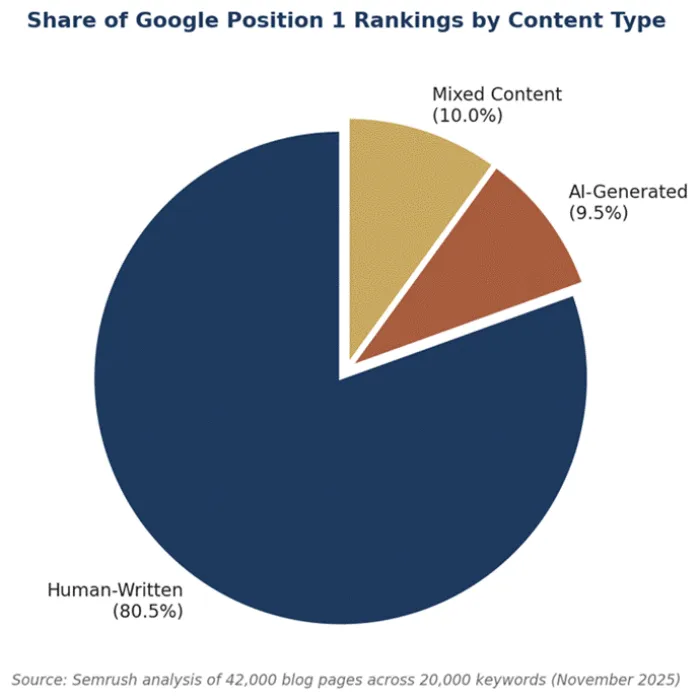

In November 2025, Semrush ran one of the largest empirical tests yet conducted on artificial intelligence and search rankings. Researchers pulled the top ten Google results for 20,000 keywords, parsed 42,000 blog pages, and ran every single one through an AI detection model. The verdict was striking. Pages classified as fully human-written occupied the number one position 80.5 percent of the time. Purely AI-generated content held that top slot in just 9.5 percent of cases. Human-written pages were eight times more likely to claim the most valuable real estate on the search results page.

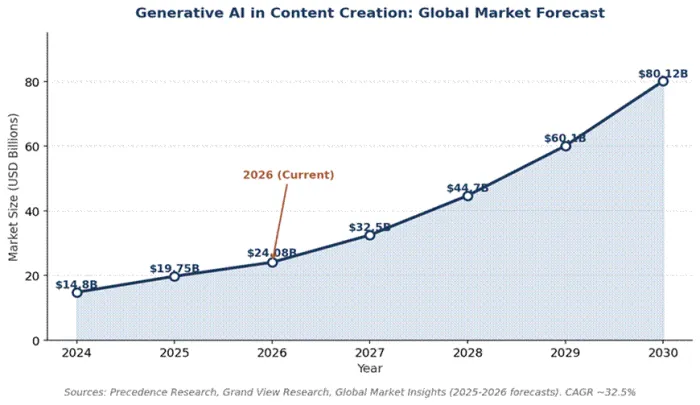

That gap matters because the economics of search have shifted. Generative AI is no longer the curiosity it was when ChatGPT launched in late 2022. The global generative AI market reached an estimated 53.7 billion dollars in 2025 and is projected to climb past 91 billion in 2026, with content creation accounting for the largest single application at roughly 35.7 percent of total spend. The tools have proliferated. Costs have dropped. Adoption has gone vertical. According to industry surveys, 85 percent of marketers now use AI for content creation tasks, up from 61 percent in 2023, and 97 percent plan to use it in some form during 2026.

Yet the evidence keeps pointing in the same direction. The teams winning at search, at conversion, and at brand trust are not the ones publishing the most AI text. They are the ones blending machine speed with human judgment. The case for hybrid workflows is no longer aspirational. It is documented in ranking data, reflected in case studies across multiple industries, and reinforced by Google's own quality guidelines. This article examines what the evidence actually shows, why the gap exists, where pure AI publishing has caused real damage, and what a defensible content workflow looks like for the year ahead.

A market saturated with AI text

The first thing worth understanding is the sheer volume of AI-assisted writing entering the open web. Industry analyses from late 2025 estimated that roughly 74 percent of newly indexed web pages contain at least some AI-generated text. Around 8 to 10 percent of blog content sampled by Semrush was classified as fully AI-generated, and that share has been rising steadily across multiple independent measurements throughout 2024 and 2025.

Spending data confirms the pattern. United States enterprise investment in generative AI tripled from 11.5 billion dollars in 2024 to 37 billion dollars in 2025, and 86 percent of enterprises reported plans to increase AI budgets further in 2026. Content tools deliver some of the highest reported returns within that spend. Industry research places average ROI on AI content tooling at roughly 420 percent when measured against time and cost savings, the highest of any single AI application category.

Figure 1. Global generative AI in content creation forecast, 2024 to 2030.

All of that creates a competitive problem rather than a competitive advantage. When 85 percent of marketers already use AI for content and 97 percent plan to in 2026, the same tools end up drafting the same kinds of articles, and generic AI output stops being a differentiator. It becomes commodity supply. Anyone who has searched for a buying guide or a how-to article in the past year has likely felt the result. Pages blur together. Phrasing repeats. Conclusions feel hedged and abstract. This is what content strategists have started calling AI homogenization, and it has practical consequences for everything from organic traffic to email open rates.

Key reading of the market: AI writing is no longer an edge. It is table stakes. The new edge sits in the layer above the AI: the strategy, the source material, the editorial judgment, and the brand voice that turn raw output into something a reader actually values. Volume has lost its leverage. Quality has reclaimed it.

What search engines actually reward

Google has been deliberate about its public position on AI content. In a Search Central blog post first published in February 2023 and repeatedly reaffirmed since, the company stated that appropriate use of AI is not against its guidelines. The same post made the boundary equally clear. Using automation to generate content with the primary purpose of manipulating search rankings is a violation of spam policy. The framework that Google uses to evaluate quality has since solidified around the four-letter acronym EEAT: Experience, Expertise, Authoritativeness, and Trustworthiness. Every one of those signals is easier for human writers to demonstrate than for an unsupervised model to fabricate.

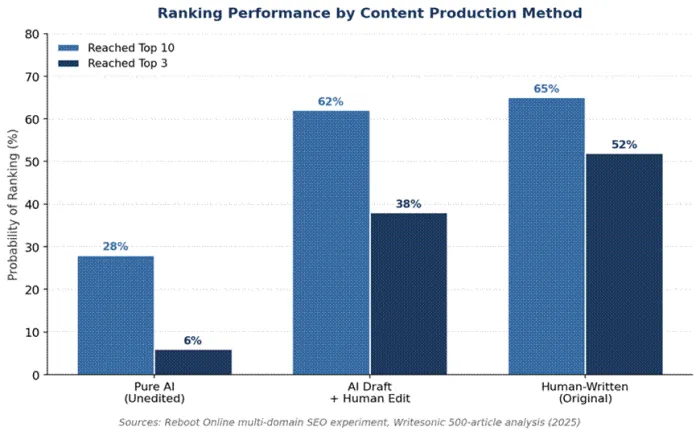

Independent ranking studies have stress-tested that framework against real data. The Semrush analysis cited above remains the largest published test, but it is far from the only one. A multi-domain experiment by Reboot Online used 25 paired test sites, each running an AI version and a human version of the same article. AI-only pages reached the top ten in 28 percent of test cases. They reached the top three in just 6 percent. The same study found AI-generated content ranked lower on average in 21 of the 25 head-to-head matchups.

Figure 2. Distribution of position 1 rankings by content classification.

A separate analysis by Writesonic looked at more than 500 AI-assisted articles across multiple verticals and found that pieces edited by humans after AI drafting ranked 34 percent higher on average than unedited AI output. Bounce rates were also lower on the hybrid pages, indicating that readers were not just landing on them, they were staying.

Figure 3. Ranking probability rises sharply when human editing is added to AI drafts.

The picture from these studies is consistent. Pure AI can compete on page one. It struggles to win the top three. The closer a query gets to high-stakes decisions, the wider the gap becomes. Behavioural research from Brandwell.ai helps explain that gap. In a blind test, readers actually rated AI-written articles slightly higher on clarity and flow. But when the same readers were asked which version they would trust for important financial or health decisions, 72 percent chose the human-written version. Perceived authority survives editorial polish. It does not survive the suspicion of pure machine origin.

AI Overviews changed the game, then changed it again

Search results have not stood still during this period. Google's AI Overviews now appear on more than 60 percent of queries, up from roughly 25 percent in mid-2024. When an AI Overview is present, organic click-through rates drop sharply. Studies across 2025 reported declines of up to 70 percent in clicks on traditional listings when the Overview occupied the top of the page. That has changed where the value is. Getting cited inside an AI Overview is now nearly as important as ranking below it. Cited pages reportedly earn 35 percent more organic clicks and 91 percent more paid clicks than competitors who are not cited.

Citation patterns inside AI Overviews follow the same logic that drives traditional ranking. Analyses of millions of AI Overview citations through 2025 and into early 2026 found that pages cited by Google's AI tend to be content-rich, freshly updated, well-cited internally, and demonstrably tied to a specific author or organisation. In other words, they look like the work of editors who actually know the subject. Generic AI text struggles to clear that bar because it offers no first-hand observation, no proprietary data, and no trail back to a verifiable expert.

Case studies: where pure AI broke, and where hybrid won

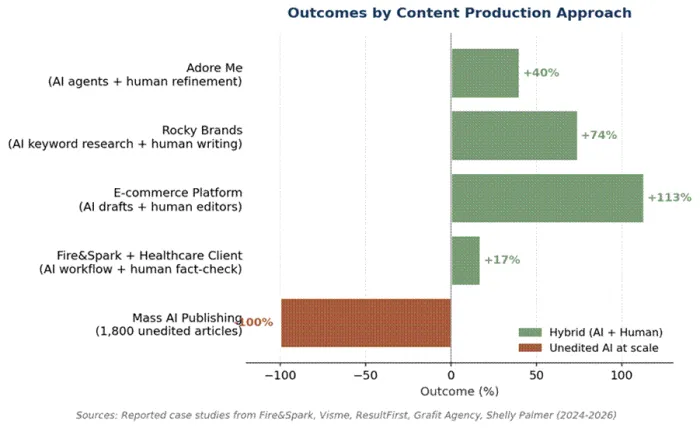

Statistics describe the shape of the problem. Case studies show what it looks like in practice. The past three years have produced enough public examples to draw clear patterns. The pattern that recurs most often is straightforward. Companies that lean on AI as a drafting and research partner, with experienced editors handling fact-checking and voice, have grown traffic and revenue. Companies that treat AI as a replacement for the editorial layer have produced content disasters that are still being cleaned up.

Figure 4. Reported outcomes from public case studies, 2024 through early 2026.

Case 1: CNET and Bankrate, the cautionary template

In late 2022, CNET began quietly publishing AI-generated financial explainers under the byline CNET Money Staff. Sister site Bankrate did the same. The program was uncovered by Futurism in January 2023. CNET's own internal audit, conducted after the discovery, found that 41 of the 77 published AI articles contained errors. Some were minor. Others were not. One widely cited example told readers that a 10,000 dollar deposit earning 3 percent interest would earn them 10,300 dollars in its first year. The interest earned is actually 300 dollars; 10,300 dollars is the ending balance. The Washington Post described the affair as a journalistic disaster. The Los Angeles Times observed that the same behaviour from a human writer would result in firing or expulsion.
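The arithmetic behind that error is easy to check. A minimal sketch in Python, assuming a single year of annual compounding as in the example (the function name is illustrative):

```python
def one_year_savings(principal: float, annual_rate: float) -> tuple[float, float]:
    """Return (interest_earned, ending_balance) after one year of annual compounding."""
    interest = principal * annual_rate
    return interest, principal + interest


if __name__ == "__main__":
    interest, balance = one_year_savings(10_000, 0.03)
    print(f"Interest earned: {interest:,.0f} dollars")  # Interest earned: 300 dollars
    print(f"Ending balance: {balance:,.0f} dollars")    # Ending balance: 10,300 dollars
```

The published error amounted to reporting the second figure as if it were the first, exactly the kind of slip a subject matter reviewer catches in seconds.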

Both publications paused their AI programs, then quietly restarted them. One of the first new Bankrate pieces published after the restart contained another factual error and was deleted within hours of journalists pointing it out. The lesson here is not that AI cannot help with financial explainers. It is that volume publishing without expert review, in a category where accuracy carries real consequences, will eventually produce content that fails publicly and damages the brand carrying it.

Case 2: The 1,800-article experiment

A widely discussed case from 2024, documented across multiple SEO industry blogs, involved a content site that mass-published roughly 1,800 AI articles in a short window. Initial indexing was rapid. Traffic spiked to around 3.6 million quarterly visits. Within a few months, Google issued a manual action and traffic collapsed to nearly zero. A separate 16-month experiment reported by Search Engine Land followed a similar arc. Roughly 71 percent of automated pages indexed within 36 days. They generated an opening burst of 122,000 impressions and 244 clicks. Within three months, visibility had fallen to about 3 percent of its peak. The pages were still indexed. They had simply lost relevance in the eyes of the algorithm once novelty wore off and quality signals settled.

Case 3: Adore Me and the agent-assisted approach

Adore Me, a direct-to-consumer apparel brand, took a different path. Rather than treating AI as a publish-now solution, the team built role-specific AI agents inside Writer's AI Studio. One agent drafted SEO product descriptions in the brand's tone. Another handled Spanish translations for the company's Mexico launch. A third drafted personalised stylist notes that human stylists then refined before sending to customers. The reported outcomes were measurable. Stylist note writing time fell by 36 percent. Product description batches that previously took 20 hours now took 20 minutes. Localised launch time dropped from months to 10 days. Most importantly for organic visibility, the company reported a 40 percent increase in non-branded SEO traffic. AI accelerated the work. Humans owned the quality bar.

Case 4: Rocky Brands and the keyword-research lift

Footwear company Rocky Brands provides another data point. The company applied AI tools to keyword research, content optimisation, and SERP analysis, but kept human writers in charge of the actual prose. Reported results from the program included a 30 percent increase in search-driven revenue, 74 percent year-over-year revenue growth, and a 13 percent rise in new users. The pattern is identical to Adore Me's. AI handled the discovery and structural work. Humans handled the persuasion.

Case 5: Fire&Spark and high-accuracy verticals

An especially instructive case involves a healthcare technology client of agency Fire&Spark. The client needed to produce state-by-state regulatory content for nurse practitioners and physician assistants, a category where a single inaccuracy could expose readers to legal risk. The agency built a workflow that used AI for drafting and structural scaffolding, then layered AI-powered fact-checking on top, and required citation links to regulatory sources at the bottom of every page. A human subject matter expert reviewed each piece before publication. The result was 200 citation-backed FAQ pages, zero accuracy issues reported, and 17 percent more traffic in three months. The takeaway is direct. In high-stakes categories, hybrid workflows are not a nice-to-have. They are the only model that scales without breaking trust.

What the case studies have in common: across every documented success, AI handled research, scaffolding, draft generation, and routine optimisation. Humans handled the editorial layer, the fact-checking, the voice, and the strategic framing. Across every documented failure, that division of labour collapsed and AI was asked to carry both halves of the workload.

Why the hybrid model wins on more than just rankings

The ranking advantage is the most measurable benefit, but it is far from the only one. Hybrid workflows produce better outcomes across at least four dimensions, each of which compounds over time.

Trust signals that AI cannot manufacture

Google's quality framework gives explicit weight to first-hand experience. The first E in EEAT stands for Experience, and the search quality rater guidelines now explicitly instruct evaluators to look for evidence that the author has actually used the product, visited the location, or worked in the field. AI cannot have lived experience. It can simulate the surface of one, but the simulation breaks under specificity. A travel article that mentions exactly which side of the train carriage gets the better view between Florence and Venice carries an authority signal that no language model produces unprompted. Readers respond to that signal. So do search algorithms.

Original data, original perspective

Industry analyses through 2025 found that content demonstrating original research, proprietary data, or distinctive analytical perspective ranked up to 40 percent higher than purely synthesised AI text on the same topics. Original research is also the strongest single driver of natural backlinks. A single linked citation from an authoritative publication can lift a domain's overall trust score in ways no amount of AI-assisted volume publishing replicates. Hybrid workflows make original data feasible because the AI absorbs the time-cost of formatting, polishing, and cross-checking, leaving humans with bandwidth to do the actual research.

Editorial judgment under uncertainty

Models still hallucinate. The frequency has dropped considerably across model generations, but the failure mode persists, and it tends to surface most often on exactly the topics that matter most: medical claims, legal interpretations, financial calculations, and current events. A human editor catches these errors not because they are smarter than the model, but because they bring context the model lacks. They notice when a cited statistic does not match its supposed source. They flag when an argument contradicts itself between paragraphs. They recognise when a recommendation reflects outdated industry practice. None of that is exotic editorial work. It is just the basic discipline of treating an AI draft as a draft.

Brand voice and reader connection

Voice is the most underrated piece of the puzzle. Readers can usually tell when content has no human behind it, even when they cannot articulate why. The cadence flattens. The examples feel hypothetical rather than lived. The opinions hedge. Brands that win in 2026 are the ones whose content sounds like a specific person from a specific company talking, not a generalised competence speaking from nowhere. Achieving that requires a human pass on every meaningful piece of published content, however efficient the drafting stage becomes.

The right human-AI workflow for 2026

Knowing that hybrid workflows outperform pure AI is only useful if there is a concrete way to operate one. The teams reporting the strongest results in industry surveys converge on a similar shape, even when they describe it in different vocabularies. The following six-stage workflow distils the common pattern.

Stage 1: Strategy and brief

Before any draft is generated, a human defines the audience, the search intent, the angle that distinguishes the piece from the existing top ten, and the original element that justifies its existence. AI can assist with competitive SERP analysis at this stage, surfacing what is already ranked and what gaps exist, but the brief itself is a human decision. A vague brief produces a vague draft regardless of how capable the model is.

Stage 2: Research and source assembly

Modern AI research tools shine in this stage. They can synthesise dozens of sources in minutes, surface relevant statistics, and structure outlines. The non-negotiable rule is that every quoted figure or factual claim eventually traces back to a verifiable primary source, not to the model's recall. Treat AI research output as a starting list of leads, not as the final answer.

Stage 3: Drafting

This is where AI delivers its most reliable productivity gains. Studies report drafting time reductions of 40 to 60 percent for skilled operators. The draft should be treated as raw material rather than near-final copy. Aim for completeness and accurate scaffolding, not polish.

Stage 4: Editorial pass

A human editor, ideally one with subject matter knowledge, rewrites the draft in the brand's voice, removes generic AI phrasing, adds first-hand observation and proprietary data, and tightens the argument. This stage is where most of the perceived quality lift happens. It is also the stage that most pure AI workflows skip or shortcut, and where they fail.

Stage 5: Fact-check and citation

Every claim that involves a number, a date, a name, or a specific assertion of fact gets verified against an external source. For high-stakes verticals, this stage should be a separate pass by a different person from the editor. AI tools can assist by flagging unsupported claims, but the verification itself remains a human task.
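Part of this stage can be mechanised: building the queue of sentences a human needs to verify. The sketch below is a hypothetical, deliberately crude heuristic, not any particular tool's method. It flags sentences containing digits (numbers, dates, percentages), month names, or capitalised words after the first word as a rough proxy for names. It verifies nothing; it only tells the fact-checker where to look.

```python
import re
from dataclasses import dataclass

# Split after sentence-ending punctuation followed by whitespace, so "3.6 million" stays intact.
SENTENCE_SPLIT = re.compile(r"(?<=[.!?])\s+")
HAS_DIGIT = re.compile(r"\d")
MONTHS = {
    "January", "February", "March", "April", "May", "June", "July",
    "August", "September", "October", "November", "December",
}


@dataclass
class Flag:
    sentence: str
    reasons: list[str]


def flag_checkable_claims(text: str) -> list[Flag]:
    """Return sentences that likely contain a number, date, or name to verify."""
    flags = []
    for sentence in SENTENCE_SPLIT.split(text.strip()):
        words = re.findall(r"[A-Za-z]+", sentence)
        reasons = []
        if HAS_DIGIT.search(sentence):
            reasons.append("number")
        if any(w in MONTHS for w in words):
            reasons.append("date")
        # Capitalised words after the first one: a crude proxy for proper names.
        if any(w[0].isupper() and w not in MONTHS for w in words[1:]):
            reasons.append("possible name")
        if reasons:
            flags.append(Flag(sentence, reasons))
    return flags


if __name__ == "__main__":
    draft = (
        "Interest is calculated annually. "
        "A 10,000 dollar deposit at 3 percent earns 300 dollars. "
        "The audit was reported by Futurism in January."
    )
    for flag in flag_checkable_claims(draft):
        print(flag.reasons, "->", flag.sentence)
```

Expect false positives (a capitalised "I", product names) and misses (spelled-out numbers). The point is to widen the net for the human checker, not to replace the check.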

Stage 6: Optimisation and publication

AI returns to active duty in the final stage. SEO tools generate metadata suggestions, structured data scaffolds, internal linking recommendations, and image alt text. Authorship metadata, including a real byline tied to a real person, is added before publication. Disclosure of AI assistance is included where readers would reasonably expect it, in line with Google's stated guidance.
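Authorship metadata is commonly published as schema.org Article markup in JSON-LD, which lets the byline travel with the page in machine-readable form. A minimal sketch, assuming placeholder values for the headline, author, URL, and dates; consult Google's Article structured data documentation for the properties it currently reads:

```python
import json
from datetime import date


def article_jsonld(headline: str, author_name: str, author_url: str,
                   published: date, modified: date) -> str:
    """Build a schema.org Article JSON-LD script tag with a named human author."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        # A real person with a profile URL, not a generic "Editorial Team" label.
        "author": {"@type": "Person", "name": author_name, "url": author_url},
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    return ('<script type="application/ld+json">\n'
            + json.dumps(data, indent=2)
            + "\n</script>")


if __name__ == "__main__":
    # Placeholder values for illustration only.
    print(article_jsonld(
        headline="How savings account interest works",
        author_name="Jane Example",
        author_url="https://example.com/authors/jane-example",
        published=date(2026, 1, 15),
        modified=date(2026, 2, 1),
    ))
```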

The shortest version of the workflow: humans set the strategy and own the editorial layer. AI handles research, scaffolding, drafting, and post-production polish. Every published page passes through at least one expert human before it goes live. Skip that final pass and the entire model collapses back into the pure AI mode that the data already shows underperforms.
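That final rule can be enforced in tooling rather than left to habit. A hypothetical sketch: the stage names follow the six stages above, while the Draft record, its fields, and can_publish are illustrative and not any real CMS's API. It blocks publication until the human-owned stages (brief, editorial pass, fact-check) carry a named reviewer, the piece has a byline, and, for high-stakes topics, the fact-checker is a different person from the editor.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Stage(Enum):
    BRIEF = 1
    RESEARCH = 2
    DRAFTING = 3
    EDITORIAL = 4
    FACT_CHECK = 5
    OPTIMISATION = 6


# Stages the workflow assigns to humans; each needs a named reviewer before publication.
HUMAN_STAGES = (Stage.BRIEF, Stage.EDITORIAL, Stage.FACT_CHECK)


@dataclass
class Draft:
    title: str
    byline: Optional[str] = None
    high_stakes: bool = False
    signoffs: dict[Stage, str] = field(default_factory=dict)  # stage -> reviewer name

    def sign_off(self, stage: Stage, reviewer: str) -> None:
        self.signoffs[stage] = reviewer


def can_publish(draft: Draft) -> tuple[bool, list[str]]:
    """Return (ok, problems); publication is blocked while any problem remains."""
    problems = [f"missing human sign-off: {s.name}"
                for s in HUMAN_STAGES if s not in draft.signoffs]
    editor = draft.signoffs.get(Stage.EDITORIAL)
    checker = draft.signoffs.get(Stage.FACT_CHECK)
    if draft.high_stakes and editor is not None and editor == checker:
        problems.append("high-stakes piece: fact-checker must differ from editor")
    if not draft.byline:
        problems.append("missing byline tied to a real person")
    return (not problems, problems)


if __name__ == "__main__":
    draft = Draft("Savings account explainer", high_stakes=True)
    for stage in HUMAN_STAGES:
        draft.sign_off(stage, "Reviewer A")  # placeholder reviewer names
    print(can_publish(draft))  # blocked: same person edited and fact-checked, no byline
    draft.byline = "Reviewer A"
    draft.sign_off(Stage.FACT_CHECK, "Reviewer B")
    print(can_publish(draft))  # (True, [])
```

Recording who signed off on which stage, rather than ticking a single "reviewed" box, also leaves a trail if a published error ever needs to be traced back.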

Common mistakes teams still make in 2026

Even teams that intellectually accept the hybrid model often fall into predictable traps when implementing it. A few of the most common are worth naming.

• Treating AI output as near-final. The draft is a draft. The editorial pass is not optional. Teams that skip it under deadline pressure produce the kinds of articles that show up in case studies as cautionary examples.

• Optimising for word count instead of insight. Long, padded articles do not outrank short, dense ones in 2026; if anything, the data favours the shorter, denser piece. Specificity beats length, and AI's bias toward verbose answers needs active editorial pruning.

• Ignoring the byline. Generic byline labels like Editorial Team or Site Staff suppress trust signals. Real names with real credentials lift both reader trust and search visibility.

• Skipping disclosure. Readers and search engines both reward transparency. A simple line acknowledging that AI assisted with research and drafting, followed by human review, costs nothing and earns goodwill.

• Using AI to manufacture experience. Asking a model to write in the first person about a product it has never used does not create authority. It creates a credibility risk waiting to surface. Real experience needs to be sourced from real people.

Where this leaves content teams

The narrative around AI and writing has matured considerably since the first wave of ChatGPT enthusiasm. The early framing pitted machines against humans and asked which one would win. The data from 2024 through early 2026 has retired that framing. The teams winning at content right now are not picking sides. They are building production systems where each layer does what it is best at. Models draft, research, and optimise at machine speed. Editors, subject experts, and brand owners shape the voice, verify the facts, and contribute the original observation that algorithms cannot replicate.

The ranking evidence is clear. In the Semrush sample, fully human-written pages held 80.5 percent of position one results. In the Writesonic analysis, human-edited AI drafts ranked 34 percent higher on average than unedited AI output. AI-only content can reach page one but rarely the top three. Pure AI publishing at scale has produced traffic collapses, manual penalties, and brand damage in case after documented case. The global generative AI market, with content creation as its largest application, is on track to pass 91 billion dollars in 2026, but commodity output from those tools is not where competitive advantage lives. The advantage sits in the editorial layer that turns generic drafts into content readers actually trust.

For content teams figuring out how to operate in this environment, the practical steps are not exotic. Build a brief before any draft is generated. Treat AI output as raw material. Keep an experienced human editor in the loop for every published piece. Verify facts independently. Put real names on real bylines. Pick a small set of tools that fit the workflow rather than letting the workflow bend to whatever tool is most fashionable that quarter. The brands that follow this discipline are the ones currently posting double-digit traffic and revenue gains. The ones that skip it are the ones whose deindexation stories show up in next year's case studies.

Tooling matters too, even if it matters less than the discipline behind it. The most useful platforms in 2026 are the ones that recognise the hybrid reality rather than fighting it. Writers increasingly want a single environment where AI drafting, source-aware research, citation handling, plagiarism checking, and human editing live together rather than fragmenting across half a dozen tabs. Platforms like Writenexa have leaned into that integrated model, building toward a workspace where the AI produces and the writer shapes, with the friction between those two stages reduced as much as possible. That is the shape the category is converging toward, and content teams evaluating their stack for the year ahead will benefit from picking tools that treat editorial judgment as a first-class part of the writing process rather than an afterthought.

The conclusion the data keeps repeating is the simple one. Pure AI scales output. Pure human writing scales quality. Combining them is not a compromise. It is the production model that wins on rankings, on trust, and on revenue, and the evidence for that has been accumulating long enough that 2026 is no longer the year to debate it. It is the year to operate on it.